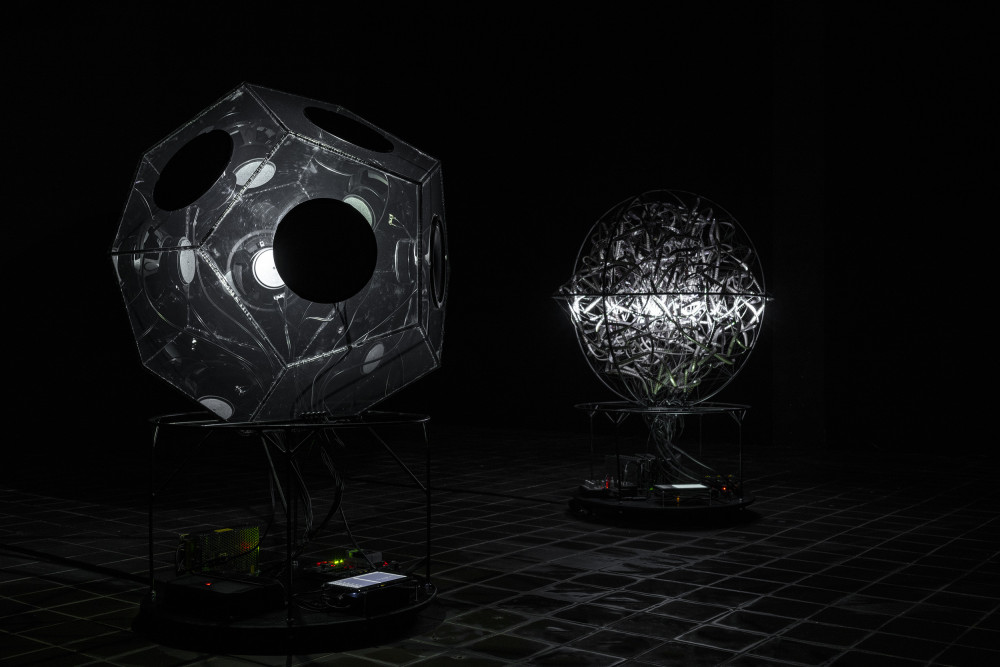

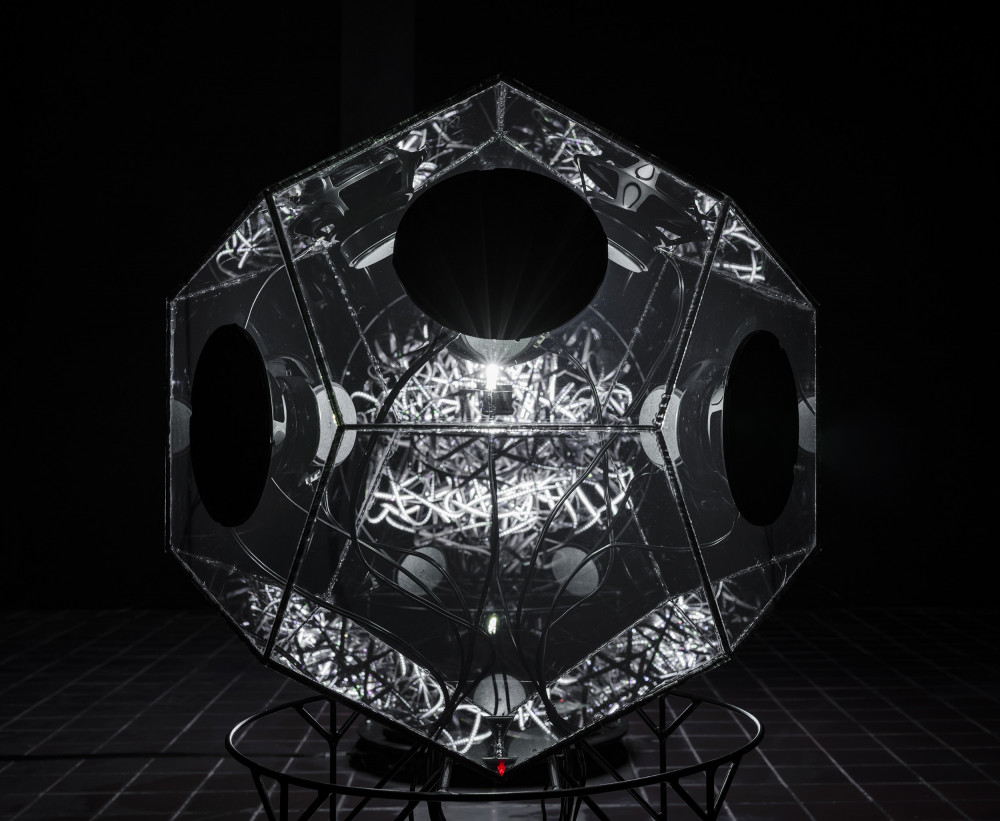

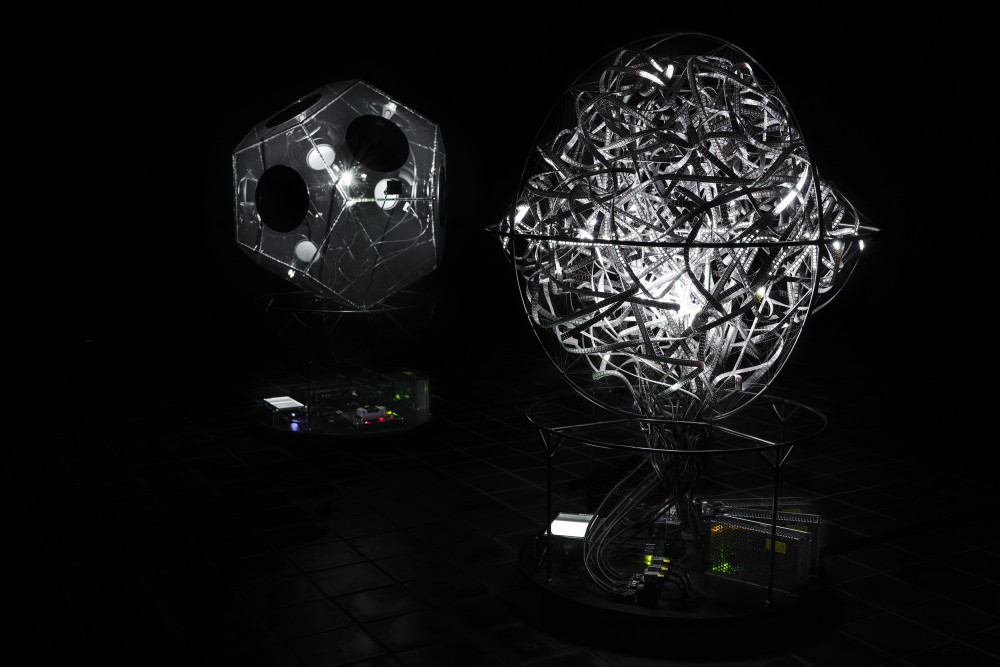

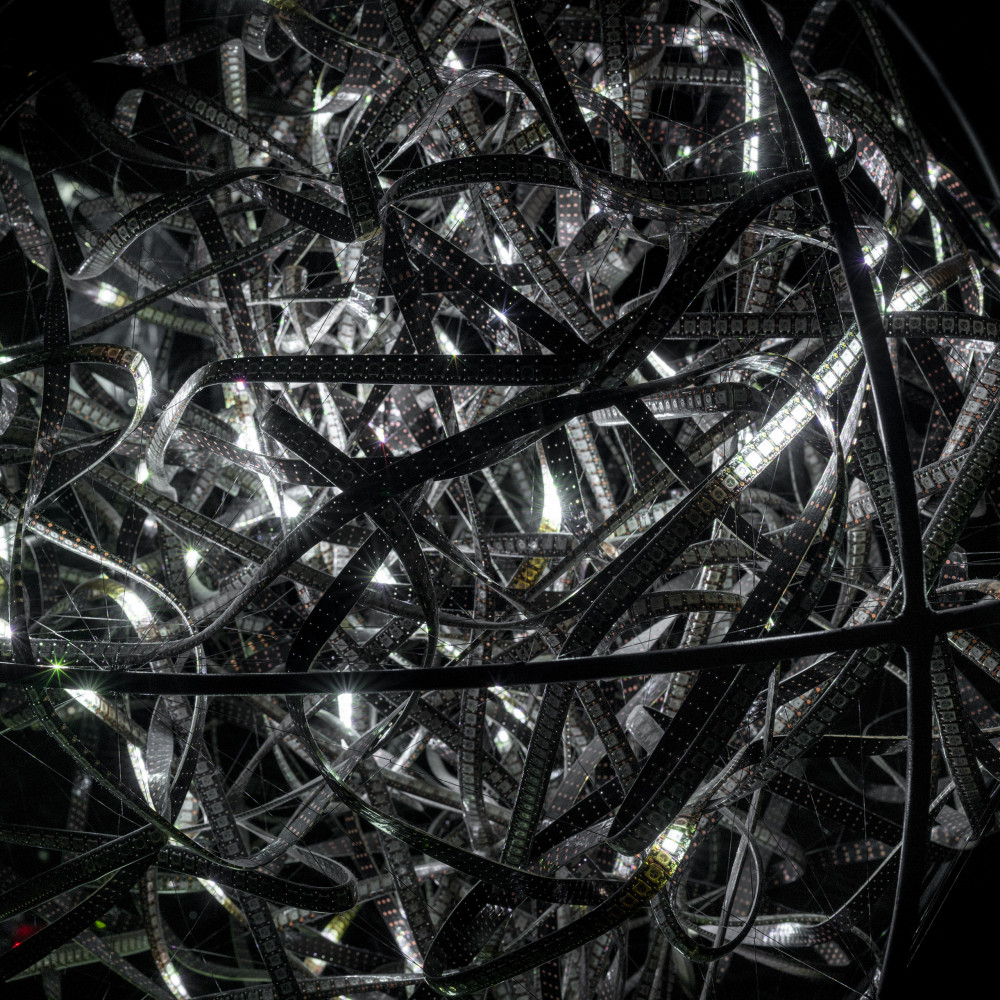

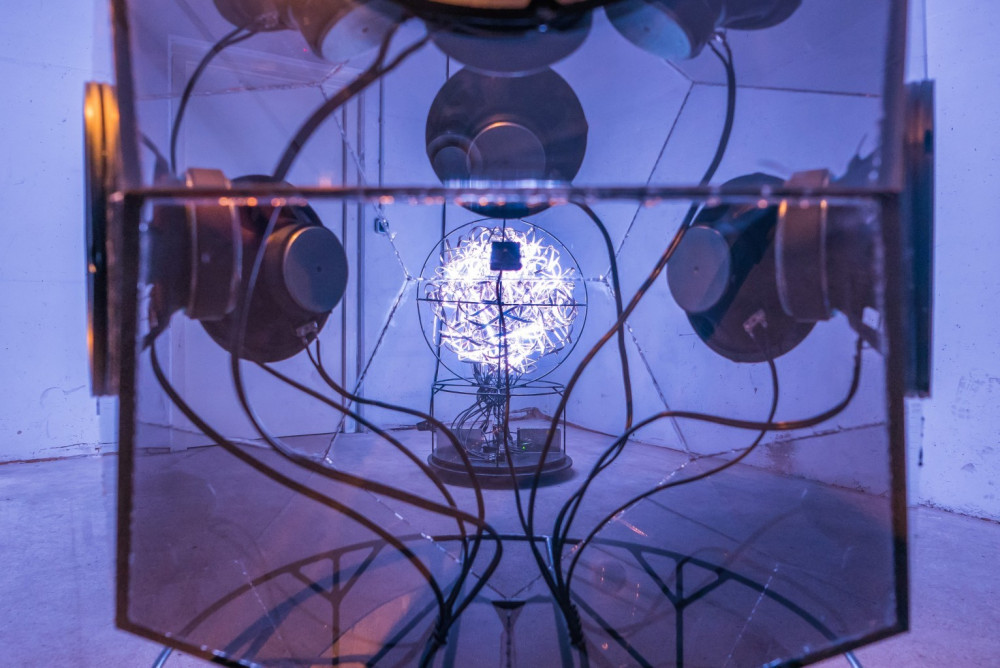

This work consists of a series of aesthetic experiments designed to make the processes of artificial neural networks perceptible to humans through audiovisual translation. Exp. #1 examines the network's inner behaviour during prediction and learning. The network is visualised as a light object in which the state of each neuron is represented by a corresponding LED. Exp. #2 questions an AI's capacity for empathy and purpose while it communicates with a second AI.

The spherical light object, 80 cm in diameter, consists of a chaotic tangle of 95 m of LED strip, a microphone, and an embedded AI computing device. It can hear sounds and create images. The sound object, a dodecahedron of black, opaque Plexiglas with the same diameter, is equipped with eight speakers, a camera, and the second AI system.

Each system is embodied in either the light object or the sound object, and each can receive the other's messages. To hold a meaningful conversation, both objects display empathic behaviour: they interpret the messages they receive and respond with intent. Together they create an ever-changing audiovisual conversation.

Selected exhibitions:

TEMPLE, 25 June - 12 July 2020, Westflügel Leipzig

re:publica campus, 6 September - 4 October 2020, Berlin